For the past few weeks I’ve been chasing some issues in my production ConfigMgr environment related to Windows 7 to Windows 10 upgrades and Windows 10 Feature Updates and SCCM Hardware Inventory. During that process, I found that several of my clients had successfully been upgraded, but were still reporting the old OS version in the ConfigMgr console. My search led to several root causes which I’m still working through, but the solution in most cases was to force a Full Hardware Inventory on the client or fully uninstall, clean and reinstall the client - I also won’t go into those steps here. I really just want to share a few of the things I did to identify clients with ‘broken’ hardware inventory.

Incorrect OS Version

I had deployed the Windows 10 1809 Feature Update to a collection of Windows 10 1803 devices and added the Operating System Build column in the SCCM Console. Then I would connect to the devices remotely using Recast Right Click Tools and Client Center for Configuration Manger by Roger Zander to force any pending updates to install. Whenever it appeared that the clients had already installed the update, I would run some PowerShell to check for the OS version and many times, force a reboot and hardware inventory collection. I began to notice that several machines never showed the correct OS version and started digging. Checking Resource Explorer in the SCCM console, I would see that the client hadn’t updated the Operating System Hardware Inventory class for a few days which led me to believe we had an inventory issue.

Bad MIFs

Whenever Hardware Inventory - among other client processes - runs on an SCCM Client, the client sends back a MIF file. This file contains the data that will be inserted into the database records for that machine. These MIF files are dropped into folders on the site server called Inboxes where they are picked up according to certain intervals (or some inboxes are triggered by new files being added) and processed (there are other file types that are managed by the inbox processes as well). In the case of Hardware Inventory, whenever the MIF is invalid for any number of reasons, the file is moved to one of several BADMIFS folders. Depending on the failure, most failures simply result in the client being notified that it’s last inventory was invalid and that it should resync or send in a new full inventory file instead of the delta that it generally sends.

In my case, I used the ConfigMgr Inbox Monitor by Robert Marshall tool to see that I had over 500 MIFs in the various BADMIFs folders. I began opening the MIFs in Notepad and could read out the device name from the second line of the file and go check that device in the console to see if I could sort out what the issue was. As I mentioned, most bad inventory will resolve itself. So my challenge was to figure out which MIFs represented devices that were having repeated inventory issues. For more on MIFs, check out this in-depth post from Umair Kahn from Microsoft.

SQL Magic

First - let me start with a disclaimer on this section (see links at the end for more info from Garth and Benjamin on this). The views and tables that I’m going to use are technically ’not supported’ by Microsoft in that they shouldn’t be used in reports or used to share out to others. The main risks in using these are that 1) some day the view could change and would break your report 2) the view likely doesn’t have the correct permissions on it and you should NEVER change permissions on your ConfigMgr Database. (Yeah, Yeah - I know some of you do change them, but that’s just silly and you shouldn’t do it)

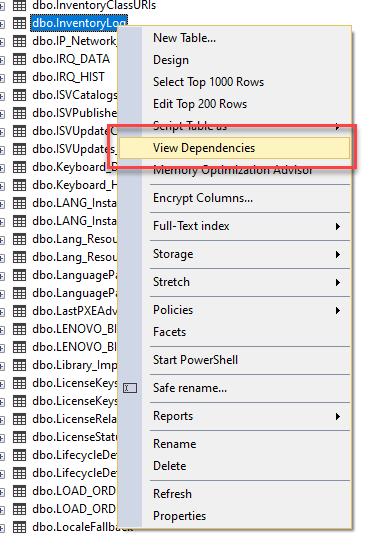

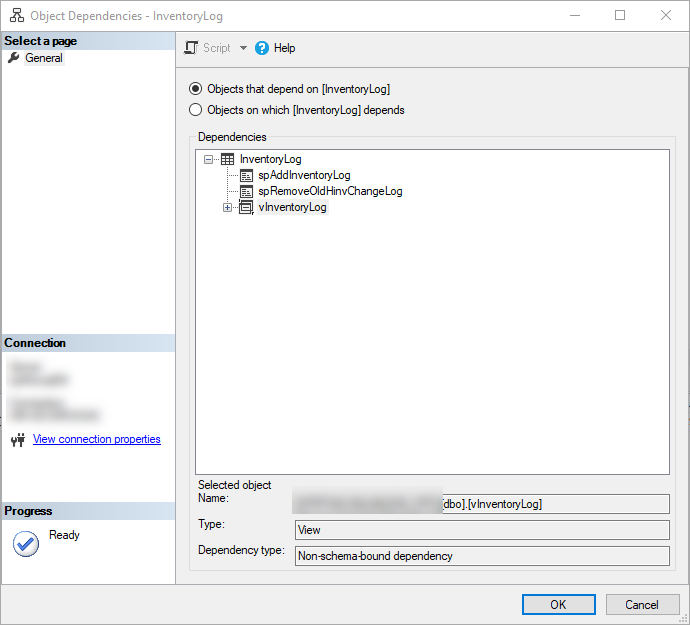

I started digging through the database and found the InventoryLog table and started playing with it. I discovered that it matched up nicely with the machines I was finding in the BADMIFs folders. I decided that this table could be the ticket to sorting this out. One great thing about the SCCM Database is that GENERALLY where there’s a table, there’s a view. You should always use the v_ views when they are available. If you can’t find a view that matches, like v_InventoryLog, check for vInventoryLog (which is the one I found). You can also right click on the table or view in SQL Server Management Studio (SSMS) and click View Dependencies.

Since I didn’t know anything about the the table, I validated a few things first. I ran SELECT DISTINCT ResourceID FROM vInventoryLog WHERE Name IS NOT NULL and the total count matched up with my total device count (within reason). I also opened up the view in SSMS to see which tables/views it was using. Here’s the view contents. As you can see, it’s doing some cool math and using InventoryLog and System_DISC. This is perfect.

| |

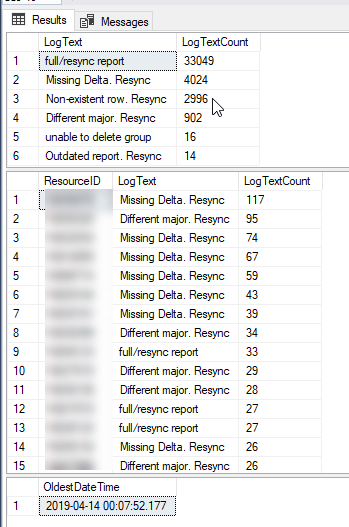

Next I used these 2 queries to show me how bad things may be. The first shows the count by LogText value to give us the total of each type of inventory is being reported. It also gives us a list of possible values to work with. The second adds in ResourceID to show devices that have had repeated failures. The third shows the oldest record in the log - which is about 90 days old.

| |

From this data, I can see that within the past 90 days, I’ve got several machines that are repeatedly sending in bad inventory. Next we need to find the max LogUtcTime for each device where the LogText is full/resync report. This will enable us to determine the last time a device reported a valid inventory.

Now that we know the last time we had a good inventory reported, we can find all of the devices who’s last inventory was NOT good. To do this, we can’t just flip the previous query around and use WHERE LogText <> 'full/resync report' because this just give us the last time inventory failed for the device but doesn’t tell us if the failure was the last thing reported for the device.

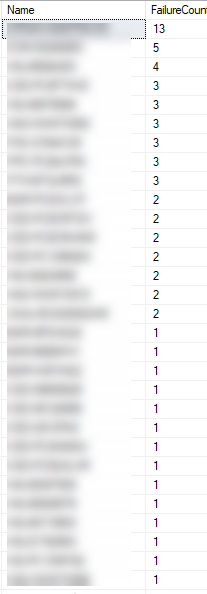

This query will give you a list of every failed inventory log entry by device where the failed entry occurred AFTER the last successful inventory was reported for that device. It also includes the failure count since the last successful inventory (the total is included on each row for a given device).

| |

to give me a DISTINCT device list that I could drop into a collection in SCCM and target with remediation steps.

| |

You’ll notice the low failure counts on many of the machines listed. When I began, I had many more with much higher counts. The ones with 1-3 failures don’t concern me since I’m sure they will auto-remediate on their next inventory attempt, but the higher counts may require a bit more attention.

Summary

Well, this was supposed to be a short post, but I never can get away with that. There’s just too much good stuff to share (so I don’t forget it 6 months from now as well). Hopefully this post has given you some thoughts on how you can keep an eye on your inventory. I do believe that the increased numbers of failures I’m seeing are directly related to In-Place upgrades from Windows 7 to Windows 10 and in other cases related to devices changing from KMS to Subscription activation for Windows. I’m still chasing down the latter. Please let me know if I missed anything or share any improvements that you can come up with.

Special thanks to Garth Jones and Benjamin Reynolds for their excellent blogs and assistance as I waded through all of this. Here are some great blogs that they’ve written around these issues that I used for reference.

https://www.enhansoft.com/what-are-the-supported-sql-server-views-to-use-with-sccm-reporting/

Plus a Reddit post that asked about InventoryLog at the same time I was dealing with this https://www.reddit.com/r/SCCM/comments/ccqomn/sccm_db_inventorylog_table_questions/

And 2 related Twitter threads

https://twitter.com/AdamGrossTX/status/1150863800456884227

https://twitter.com/AdamGrossTX/status/1149065393794879488